Cutscene - Research

Research

Throughout all media industries, the use of three-dimensional (3D) models has grown so prevalent that it’s rare to see anything without something 3D modelled. From big-budget films to small adverts shown on TV, the use of 3D grows every day. This is because 3D modelling allows a level of freedom, creativity and realism that cannot always be achieved through real-life footage, such as in fantasy, sci-fi or historical features. 3D can also be used simply to enhance what is already on screen.

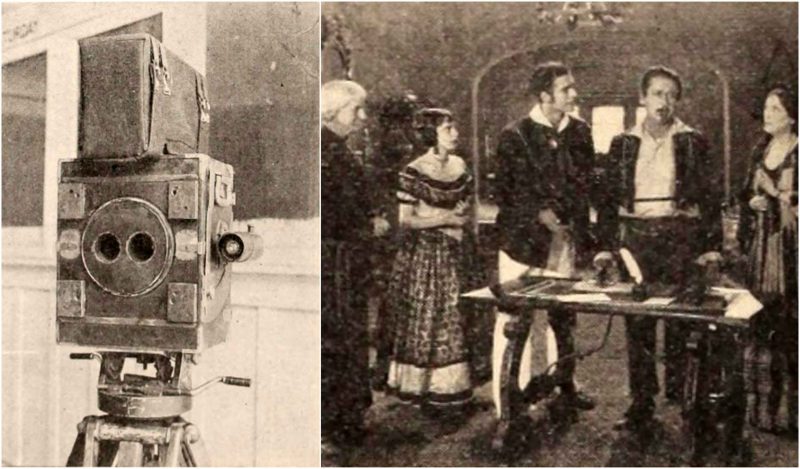

3D has been used in entertainment for almost a hundred years, with the first ever 3D film debuting in 1922. This film was “The Power of Love” and was displayed in 3D using an early form of 3D imaging called ‘anaglyph’. This uses two different images, taken a few centimetres apart and displayed through a red and blue or red and green filter so that both images appear on screen. The viewer must then wear anaglyph glasses that have one lens of each corresponding colour, so that each eye sees only one of the two images and the brain merges them into a single picture that appears to be in 3D. This technique was then used for a number of decades until technology was advanced enough to allow the use of digital modelling techniques. As can be seen in image [1], the camera used at the time has two viewing ports set a few inches apart. This is to mimic the distance between human eyes so that when the dual-colour glasses are worn, the image can appear as 3D.
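
The way an anaglyph packs two views into one picture can be sketched in a few lines of Python. This is only an illustrative sketch, not how any particular film was produced: it assumes a red/blue filter pair, represents images as plain lists of `(red, green, blue)` tuples, and the names `make_anaglyph` and `seen_through` are made up for this example.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]  # (red, green, blue), each 0-255
Image = List[List[Pixel]]     # rows of pixels


def make_anaglyph(left: Image, right: Image) -> Image:
    """Merge a left-eye and a right-eye image into one red/blue anaglyph."""
    same_height = len(left) == len(right)
    same_widths = all(len(a) == len(b) for a, b in zip(left, right))
    if not (same_height and same_widths):
        raise ValueError("left and right images must have the same dimensions")
    combined: Image = []
    for left_row, right_row in zip(left, right):
        # Assumption: red channel comes from the left view, blue from the right.
        combined.append([
            (left_pixel[0], 0, right_pixel[2])
            for left_pixel, right_pixel in zip(left_row, right_row)
        ])
    return combined


def seen_through(image: Image, channel: int) -> List[List[int]]:
    """Approximate a coloured lens as passing a single colour channel."""
    return [[pixel[channel] for pixel in row] for row in image]


# Two tiny one-row "photos" taken from slightly different positions.
left_view: Image = [[(200, 200, 200), (10, 10, 10)]]
right_view: Image = [[(10, 10, 10), (200, 200, 200)]]

anaglyph = make_anaglyph(left_view, right_view)
print(seen_through(anaglyph, 0))  # what the red lens passes (left view)
print(seen_through(anaglyph, 2))  # what the blue lens passes (right view)
```

Each lens recovers only the view that was stored in its colour channel, which is why each eye ends up with a different image.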

Although this was the first use of 3D, most studios abandoned the feature until the 1970s and 80s. During this time, a number of films were released to try and rekindle the old style of anaglyphs, but they were usually met with poor reviews and most ended up being forgotten by the public. During the late 80s, Japanese developers produced a system that allowed for the display of 3D footage on standard playback devices in the home. This too was short-lived, as there were only a dozen titles for the system and it was discontinued in the early 90s.

During the mid-1980s, the ‘IMAX’ film format began to be used for 3D, which allowed for a better 3D experience than the spate of B-movies from a few years before. 3D IMAX films were originally released as ‘special events’, with audience members viewing on an impressively large screen with higher-quality imaging. The glasses were also updated, going from the flimsy cardboard red/blue or simple polarized glasses to active LCD shutter glasses [3]. These worked by using a transmitter that synchronized the glasses with the screen’s display rate. Since they were powered devices, they were somewhat bulkier than their predecessors, but they displayed a superior image and 3D effect, so they were preferred for the most part.

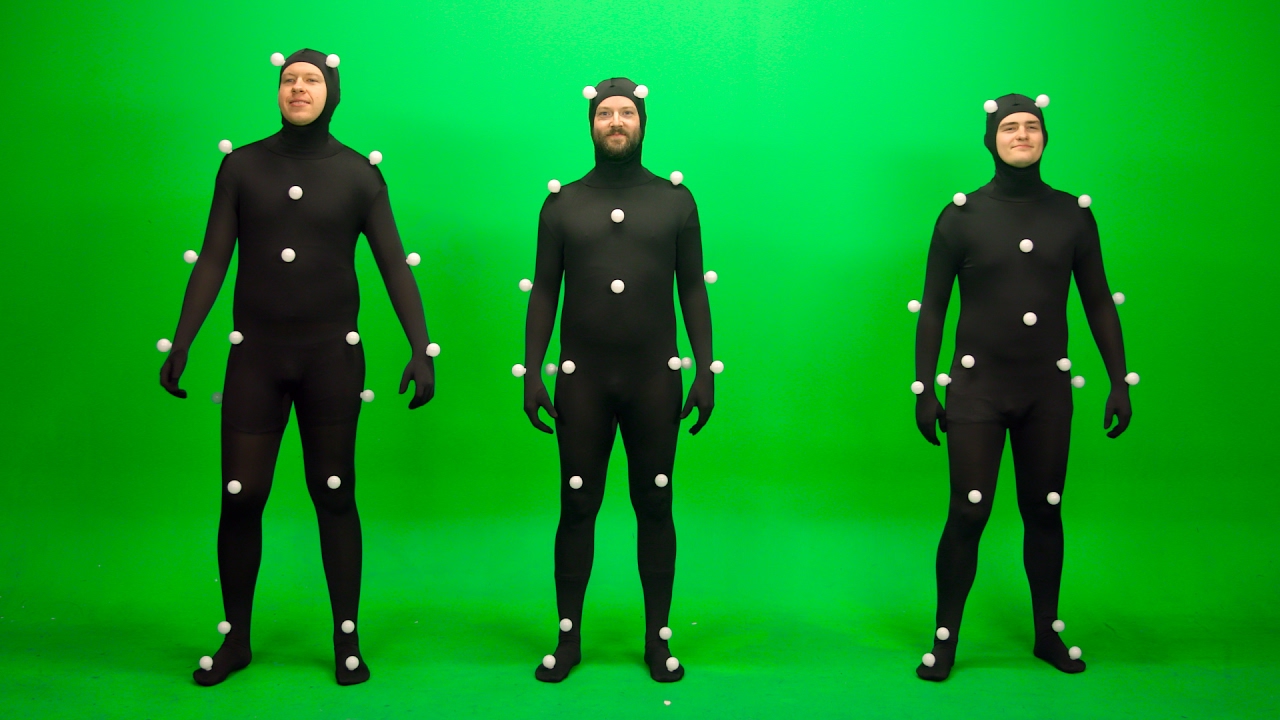

These suits have evolved over the twenty to thirty years they have been in use. They were originally skin-tight suits with small white balls placed in key locations to allow a digital skeleton to be made from the wearer’s movements. This evolved into suits with many more, much smaller reference points on the body to better capture the movements of the characters, with spots added to the faces of actors to allow facial expressions to be mimicked.

Video game developers have mostly been the pioneers in the use of 3D since the release of the first 3D game, ‘Battlezone’, a simplistic tank shooter with 3D wireframe graphics [6]. This paved the way for future generations of 3D gaming. Games at first used a very simple version of 3D similar to Battlezone, but when hits such as ‘Crash Bandicoot’, ‘Spyro the Dragon’ and ‘Tomb Raider’ were released, the industry suddenly rocketed forwards and 3D became the standard for most games.

The problem with 3D games from this period is that the technology capable of running 3D was very basic, and doing it with any great level of detail took technology that was not available for home use. Because of this, early 3D games used a lot of shortcuts to allow them to run in 3D whilst still being suitable for home use. In the original Tomb Raider game, the main character had such a low poly count that her face was almost flat, and everywhere that should have been round and curved instead came to a prominent point [7]. Despite this, the fact that any game could be made in 3D outweighed the downside, and people still thought of the graphics as groundbreaking at the time.

In the current generation, the use of 3D in games is phenomenal. With a powerful enough PC, games can be run in such high detail that it can sometimes be hard to tell whether an image is a digital render or a real-life photograph. With the upcoming release of games such as ‘Battlefield 5’, the gaming industry has never been more visually stunning. The graphics card manufacturer ‘NVIDIA’ has developed a new system which allows for real-time ray tracing. This means that the game renders light rays as they are produced, but only in relevant areas. This allows for dynamic reflections, hyper-realistic shadows and near-perfect texturing on all assets.
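
To give a feel for what tracing a light ray involves, here is a minimal sketch of one small step: bouncing a ray off a flat surface to get a mirror reflection. It is not NVIDIA’s implementation, which runs on dedicated graphics hardware; the helper names `dot` and `reflect` are made up for this example, and the surface normal is assumed to have length 1.

```python
from typing import Tuple

Vector = Tuple[float, float, float]


def dot(a: Vector, b: Vector) -> float:
    """Dot product of two 3D vectors."""
    return sum(x * y for x, y in zip(a, b))


def reflect(direction: Vector, normal: Vector) -> Vector:
    """Mirror an incoming ray direction about a unit-length surface normal.

    Uses the standard reflection formula r = d - 2 (d . n) n.
    """
    scale = 2.0 * dot(direction, normal)
    return (
        direction[0] - scale * normal[0],
        direction[1] - scale * normal[1],
        direction[2] - scale * normal[2],
    )


# A ray travelling forwards and downwards hits a floor that faces straight up.
incoming: Vector = (1.0, -1.0, 0.0)
floor_normal: Vector = (0.0, 1.0, 0.0)

bounced = reflect(incoming, floor_normal)
print(bounced)  # the ray keeps moving forwards but now travels upwards
```

A ray tracer repeats this kind of calculation for a very large number of rays every frame, which is why doing it in real time needs such powerful hardware.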

A technique that is often used by companies to create a 3D object is scanning in a real object. This is a process where an object is either made in the real world by hand, such as a sculpture, or collected, and can be as simple as a lightbulb or screwdriver, and a device is then used to scan a copy of the object into a digital space. This technique is usually reserved for very complex shapes that would be time-consuming and costly to develop as a model; it is therefore easier to create the object in the real world out of clay, or sometimes stone as with a statue, and then scan it in to be used as a model.

The use of 3D isn’t always as simple as creating an asset and placing it into a scene; sometimes the 3D that is put into place has to meld seamlessly into the scene that is already there. A lot of films shot as live action use 3D modelling to help build the scenery.

One example of this being done to a high standard is in the 2015 film ‘Mad Max: Fury Road’. The scene in which a character is being chased through a canyon looks as real as can be, but the original, unedited footage is very different. The scene was filmed in Namibia across a hilly, sandy area with mounds not much bigger than the vehicles themselves. This was then changed in production into the dangerous-looking ravine. This was done because it is both difficult and dangerous to film in such a location.

3D technology has also become so advanced that there are companies that produce exclusively 3D animated films and are highly profitable in their efforts. Two of the biggest companies that do this are ‘Pixar’ and ‘DreamWorks’. These two companies make animated feature films of such a high calibre that they have both become household names worldwide. Pixar is renowned for using a more cartoonish style with its models, having made such films as ‘Toy Story’, ‘The Incredibles’ and ‘Finding Nemo’. These films have characters that are either personified creatures or superheroes with unobtainable body proportions. This style works well because Pixar, which is owned by Disney, has a primary audience of young children and families, which means the art style appeals more to the younger audience.

DreamWorks, on the other hand, has a reputation for making films that are so high in quality that it is possible to see individual hairs, even in small areas such as eyebrows and eyelashes. However, they do still use a cartoonish style, just not as disproportionate as Pixar’s. The most notable films that DreamWorks has made are ‘How to Train Your Dragon’, ‘Shrek’ and ‘Kung Fu Panda’. While the main characters in these are often not human, and in the case of the first one include a literal dragon, they are still much more realistic in the ways they move and look.

The style of 3D modelling influences the feel of a game massively. Whether it’s a child-friendly game or an action-packed gore fest, if the modelling isn’t in keeping with the feel of the game, nothing will work. The recent remake of ‘Doom’ shows this at play. From the first moment the player begins, they are bombarded by sights and experiences, and because of this the modelling has to follow suit. Whether the player is in an industrial area or a rocky, mountainous region, the levels follow a rule of having areas full of sharp outcrops or various forms of structure covering almost every available space. This helps to encourage the frantic pace the game sets by throwing further visual information at the player.

The enemies of this game also follow a similar rule. Since the game is a 3D High Definition (HD) remake, all the enemies are based on the old 2D sprites from the original Doom game. From the basic grunts to the big bosses, they are all hellish abominations of bone and flesh. The enemies called ‘Cacodemons’ are floating masses of meat covered in bony horns, with a giant mouth and one large eyeball. From this description alone, it is possible to understand that these creatures are meant to be, if not scary, then gruesome. The game encourages the player to play recklessly and aggressively, and having an enemy like this coming towards you makes you want to take it down as quickly as possible. If these creatures were modelled as smiling balloons, for example, it would take almost all the interest out of fighting them; this is why the modelling style must fit in with the game.

Following the same idea but with a completely different style of game, ‘Overwatch’ has been critically acclaimed for its models. These are so diverse and unique to each character that nothing more than a quick glance is needed to know which character you’re looking at. There is more reason to this than just ‘artistic flair’, however; it is almost a necessity because the game is heavily based around competitive gameplay and knowing which character should be targeted first. If the characters looked alike, it would be much harder to distinguish which characters are a priority. It also helps to make the game more dynamic. Having such a varied roster of characters to learn helps increase complexity, but even more than this, the different model sizes impact gameplay directly. Since the characters range from a knight in very large armour down to a young and small woman, the hitboxes make the heroes with more health easier to hit, whilst those who would die much more quickly have a better chance of survival thanks to their smaller models.
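
The link between model size and how easy a hero is to hit can be shown with a small sketch. Everything here is hypothetical: the health values, the circular hitboxes and the evenly spread grid of shots are invented for illustration and are not taken from Overwatch itself.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Hero:
    name: str
    health: int           # hypothetical health pool
    hitbox_radius: float  # hypothetical circular hitbox, arbitrary units


def is_hit(hero: Hero, shot_x: float, shot_y: float) -> bool:
    """Return True if a shot lands inside a hitbox centred on the origin."""
    return shot_x * shot_x + shot_y * shot_y <= hero.hitbox_radius ** 2


def count_hits(hero: Hero, shots: List[Tuple[float, float]]) -> int:
    """Count how many of the given shots land on the hero."""
    return sum(is_hit(hero, x, y) for x, y in shots)


# The same evenly spread burst of shots is fired at both heroes.
shots = [(x * 0.5, y * 0.5) for x in range(-4, 5) for y in range(-4, 5)]

knight = Hero("armoured knight", health=500, hitbox_radius=2.0)
small_hero = Hero("small hero", health=150, hitbox_radius=0.75)

for hero in (knight, small_hero):
    print(hero.name, "is hit by", count_hits(hero, shots), "of", len(shots), "shots")
```

With identical aim, the larger hitbox takes many more hits, which is the trade-off against the larger health pool described above.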

The maps for this game also impact how it is played. Since the game focuses so much on the competitive aspect of a six-versus-six match, the maps can’t be too distracting for the player, whilst still keeping the style of where they are set, as all maps in this game are set in the real world. Having a simpler design means the players will automatically focus more on the enemy team and less on what the surrounding environment looks like.

Doing this was a good choice by the developers because it has another benefit: the game is much easier to run on lower-powered PCs. This is another big reason why modelling has to have so much thought put into it. Overwatch is designed to be fast-paced with a lot of action going on at almost all times; because of this, if the models were very high poly, even the stronger PCs would struggle to run it smoothly.

A pre-rendered cutscene is created long before a game or production is released because it can take literal hours to render out just a few minutes, or even seconds, of footage. Because of this, the rendering is done while production is still ongoing and could not feasibly be done by a computer that a typical player owns. The benefit of doing this, though, is that a very high amount of detail can be used. Since the user’s computer doesn’t have to render the scene, developers can put in detailed lighting, high-poly models and complex shaders to make the scene look as detailed and impressive as they require. This makes pre-rendered cutscenes good for large cinematic sequences that are key to building the story in a game. Any film or series is likewise pre-rendered before it reaches the general public. One downside to using a pre-rendered cutscene is that there is no way to interact with anything that’s going on in the scene.

A cutscene that is rendered in real time, however, can be interacted with. Since a real-time render is being created in the moment by the user’s PC, the player is able to have a level of interactivity with the cutscene. Because the game needs to render this at the same time as everything else in the area, the quality of both the animation and the visual effects is fairly diminished compared to a pre-rendered cutscene. The lighting and modelling have to be quite minimal and simplistic in most cases because of the need to render a lot of things at once in a dynamic space. The upside, however, is that these cutscenes can be played within the scene almost no matter where the character is. Examples include the animations that play when a player opens a lock [21], or cutscenes in which the player can move the camera or even the character while the cutscene is playing.
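
The gap between the two approaches is easier to see with some rough arithmetic. The sketch below uses made-up figures (a 10 second cutscene at 24 frames per second, two minutes of offline render time per frame, and a 60 frames per second target for real time); real projects vary widely, and the function names are invented for this example.

```python
def frames_in(seconds: float, fps: int) -> int:
    """Number of frames needed for a clip of the given length."""
    return round(seconds * fps)


def offline_render_hours(seconds: float, fps: int, minutes_per_frame: float) -> float:
    """Total render time for a pre-rendered clip, in hours."""
    return frames_in(seconds, fps) * minutes_per_frame / 60.0


def realtime_budget_ms(fps: int) -> float:
    """Time available to render one frame in real time, in milliseconds."""
    return 1000.0 / fps


# Assumed figures for illustration only.
clip_seconds = 10
print("Frames to render:", frames_in(clip_seconds, 24))
print("Pre-rendered, hours needed:", offline_render_hours(clip_seconds, 24, 2.0))
print("Real time, milliseconds per frame:", round(realtime_budget_ms(60), 2))
```

Under these assumptions the pre-rendered clip can spend minutes on every frame, while the real-time version has only a few milliseconds, which is why its lighting and models have to be so much simpler.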

The first step of the pipeline is pre-production. At this stage the product is nothing more than an idea; it may have a few concept sketches made by the person who pitched it, but nothing else. This idea must then be taken before a board of developers, who will decide whether they think it’s a good enough idea to spend time, funds and resources on. If they think the pitch has potential, it will be sent on to have concept sketches made, if none have been made already, and a storyboard produced, allowing developers to better see how the product will pan out. After the storyboard is complete, it is turned into an animatic by putting every frame in order for the correct amount of time and adding very basic sounds, which can sometimes be nothing more than the artists or editors using their voices. At this stage, the production can be shut down if the developers don’t feel the animatic has any real future as a production.

If the animatic gets cleared and development can move forward, everything moves on to production. This is where the bulk of the work is done. The first thing that must be done is research and development (R&D), where the production team researches what work they need to do, such as model ideas, themes, environments and ideas of how to build the project. Following this is the modelling portion of development; this, combined with the texturing, rigging and animating, makes up the largest section of work.

This is where the models get made by the company to the specifications from the R&D. With the models complete, they get textured and rigged so that they are fully ready to be implemented into the scene. At this stage, the animating can finally commence. This takes a long time because a lot of effort needs to go into every frame and, in most animations, there are hundreds or thousands of frames. With the animating complete, the final step of production is to add the visual effects (VFX) and lighting. This covers things such as smoke, fire and any particle effects, and ensuring the lighting looks as realistic as possible. The animations finally get rendered out and sent off for post-production, the final stage.

Before being sent out for public viewing, all the post-production must be completed. The rendered animation must first be composited into one sequence. This is done by collecting all the rendered scenes and editing them together seamlessly to look like one clip. The editors will then work with foley artists to add realistic sounds to the animation. Once composited into one sequence, the files are sent either first to 2D VFX, to add any extra details over the top of the production, or straight to a colour correction studio, which ensures the colours of the sequences are realistic and correctly shown. Once all of this has been completed, the product can finally be rendered, exported as the final product and sent out for public viewing.
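
The pipeline described above can be summarised as an ordered list of stages with two points at which the project may be cancelled. This is only a sketch of the text, not a tool any studio uses; the names `STAGES`, `GATES` and `run_pipeline` are invented for this example.

```python
from typing import Dict, List, Tuple

# Stages and steps in the order they are described in the text.
STAGES: List[Tuple[str, List[str]]] = [
    ("pre-production", ["pitch", "concept sketches", "storyboard", "animatic"]),
    ("production", ["research and development", "modelling", "texturing",
                    "rigging", "animation", "VFX and lighting", "rendering"]),
    ("post-production", ["compositing", "foley sound", "2D VFX",
                         "colour correction", "final render and export"]),
]

# Steps after which the developers decide whether the project continues.
GATES = {"pitch", "animatic"}


def run_pipeline(approvals: Dict[str, bool]) -> List[str]:
    """Walk the pipeline in order, stopping at the first gate that is not approved."""
    completed: List[str] = []
    for stage, steps in STAGES:
        for step in steps:
            completed.append(f"{stage}: {step}")
            if step in GATES and not approvals.get(step, False):
                return completed  # the project is shut down here
    return completed


# A project whose pitch is approved but whose animatic is rejected.
cancelled = run_pipeline({"pitch": True, "animatic": False})
print(cancelled[-1])

# A project that is approved at both gates and reaches public release.
finished = run_pipeline({"pitch": True, "animatic": True})
print(len(finished), "steps completed, ending with", finished[-1])
```

Laying the steps out this way makes it clear that the cheapest work (sketches, storyboard, animatic) comes before the approval gates, while the expensive modelling and animation only start once the animatic has been cleared.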

References

http://vertexmodelling.co.uk/wp-content/uploads/2016/04/Vertex-Modelling-Arch-of-Triumph-04.jpg [24]
